AI Roadmap for Today's Enterprise

Introduction

Enterprises possess massive volumes of historical records, user logs, and transactional databases. Originally, this data was not collected for AI purposes; it was stored for compliance, basic analytics, or simple record keeping. Today, leadership teams have ambitious AI-oriented objectives, aiming to transform these static archives into intelligent systems. Organizations failing to bridge this gap will struggle to maintain their market position. The challenge is not a lack of information but the absence of a modern AI foundation.

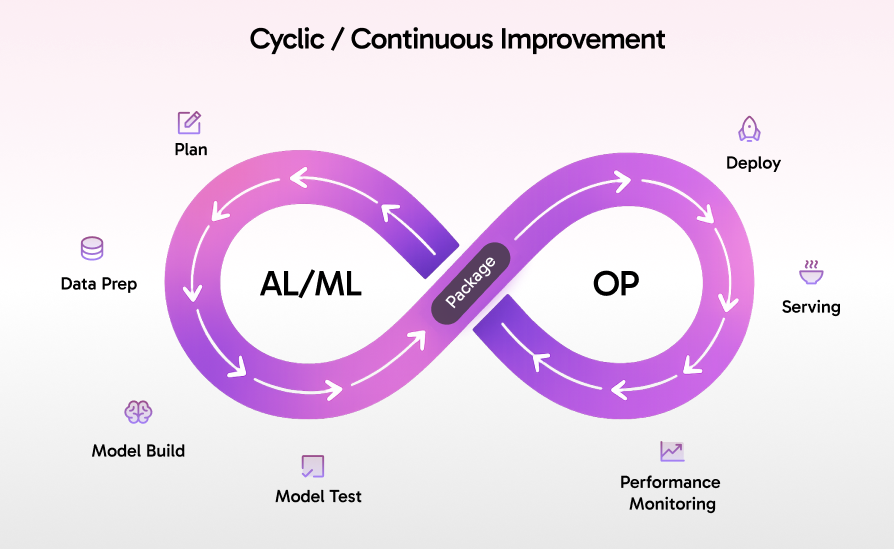

Building these capabilities requires a strategic shift toward continuous AI development and operations. It is no longer about launching a single predictive model; it requires a cyclic, continuous-improvement methodology. As illustrated in modern AI/ML workflows, the process runs as a continuous loop: the AI/ML phase covers planning, data preparation, model building, and testing, after which the system is packaged and handed to the operational phase for deployment, serving, and continuous performance monitoring. The result is a dynamic system that evaluates feedback, routes queries intelligently, and refines its outputs over time. At Analyze.Agency, we transform disconnected data into generative intelligence because we believe the era of static archives is over. Your business should be empowered to take instant action based on live awareness. With modern AI architectures, your data drives intelligent decisions the moment it is queried.

How It's Implemented in the Real World

A real-world example of this enterprise transformation is seen at Moderna, which built a fully integrated AI roadmap to connect its clinical data, supply chain logistics, and research archives. Rather than launching isolated predictive tools, they implemented continuous AI pipelines that turn static historical records into a dynamic generative intelligence system, accelerating both drug discovery and operational efficiency across the organization.

Another powerful example is Maersk, which modernized its global operations by transitioning from static shipping logs to a continuous AI-driven infrastructure. By utilizing multi-cloud environments, they actively route live tracking data, weather patterns, and supply chain variables into predictive models, optimizing global fleet movements in real time while maintaining strict data governance. This continuous processing ensures their AI architecture is not just a one-off project but a central, evolving operational layer that adapts to new information. We offer tailored architectural approaches to help your enterprise implement these highly scalable, compliant AI systems safely and efficiently.

Technical Architecture

When it comes to implementing generative AI, there is rarely a single blueprint for success. The technology landscape shifts rapidly, making it difficult to identify robust configurations among fleeting trends. As your transformation partners, we have designed multi-cloud architectures across hyperscalers, Eurostack, and hosted solutions to handle complex model routing while ensuring strict security. We use reliable cloud-native services to build systems that scale and comply with strict corporate governance standards.

HyperScaler Architecture

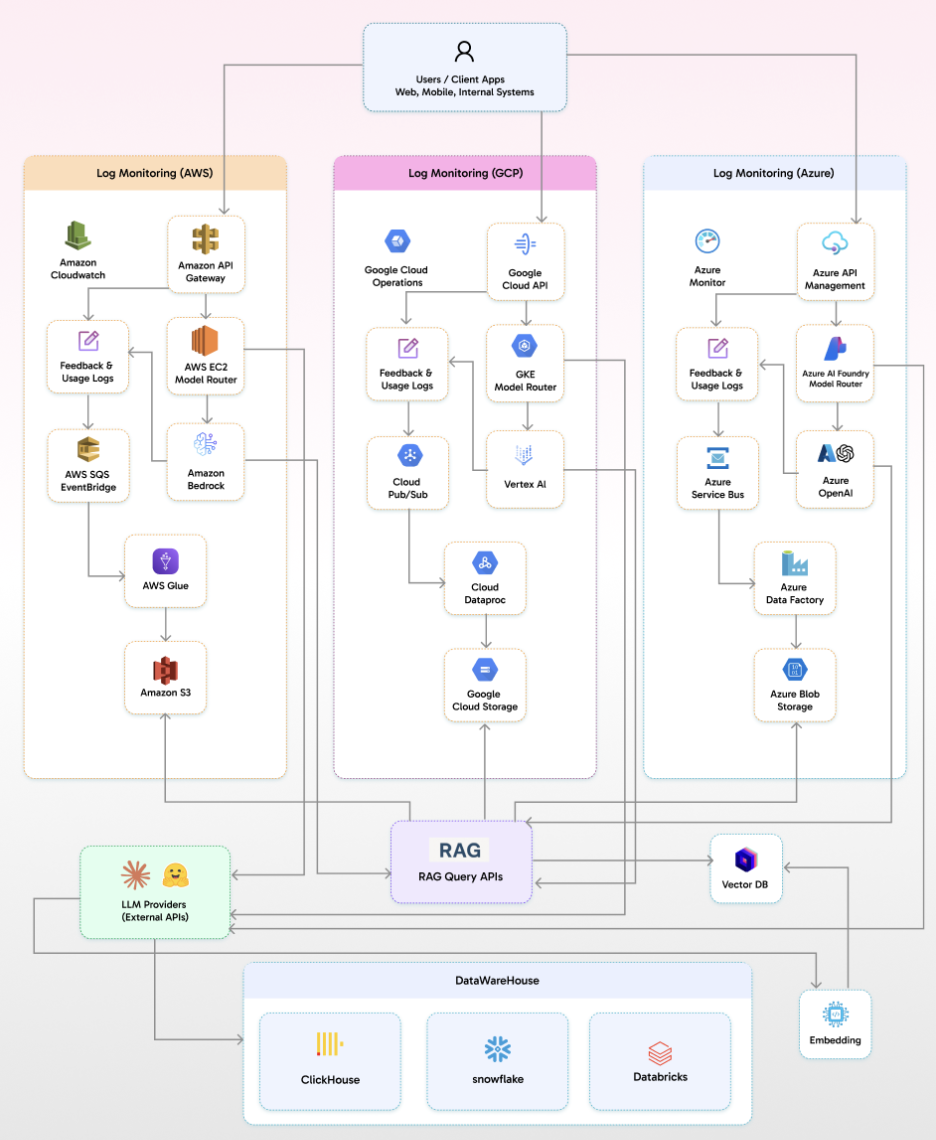

Hyperscale platforms like AWS, GCP, and Azure provide essential building blocks for enterprise AI operations. The transformation process and architectural flow occur in several synchronized layers, beginning with the client entry point where users interact with the system via web, mobile, or internal apps. These requests hit a dedicated RAG Query API before the workflow branches into specialized cloud environments based on the optimal model for the task.

This architecture redefines AI orchestration by transforming each cloud platform into a dynamic, self-optimizing engine. On AWS, the EC2 Model Router balances workloads between Amazon Bedrock and custom endpoints for low-latency, cost-effective inference, while feedback loops via CloudWatch, SQS, and EventBridge drive continuous model refinement. AWS Glue further processes raw logs into actionable insights, enabling data-driven optimization. GCP uses Google Kubernetes Engine (GKE) as a resilient orchestrator, routing requests to Vertex AI with auto-scaling. Cloud Operations and Dataproc go beyond logging: they detect anomalies, trigger retraining, and feed insights back into the system for closed-loop improvement. Meanwhile, Azure uses AI Foundry and API Management to create a secure gateway to OpenAI, with Azure Monitor and Service Bus supporting compliance and traceability, while Data Factory turns usage logs into predictive analytics for proactive resource management.
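The routing decision described above can be sketched in a few lines. This is a minimal illustration, not the production router: the `Backend` fields, the backend names, and the cost and latency figures are all hypothetical placeholders, and the policy (cheapest eligible backend within a latency budget) is only one plausible choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str             # hypothetical label, not a real service identifier
    cost_per_1k: float    # assumed cost per 1,000 tokens (arbitrary units)
    p95_latency_ms: int   # assumed 95th-percentile latency
    tasks: frozenset      # task types this backend can serve


def route(task: str, max_latency_ms: int, backends: list[Backend]) -> Backend:
    """Pick the cheapest backend that supports the task within the latency budget."""
    eligible = [
        b for b in backends
        if task in b.tasks and b.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise LookupError(f"no backend can serve {task!r} within {max_latency_ms} ms")
    # Cheapest first; latency breaks ties.
    return min(eligible, key=lambda b: (b.cost_per_1k, b.p95_latency_ms))


# Illustrative figures only.
BACKENDS = [
    Backend("managed-foundation-model", 3.0, 900, frozenset({"chat", "summarize"})),
    Backend("custom-endpoint", 1.0, 1500, frozenset({"summarize", "classify"})),
]
```

With a relaxed latency budget, `route("summarize", 2000, BACKENDS)` selects the cheaper custom endpoint; tightening the budget to 1000 ms moves the same task to the managed model. In practice the cost and latency figures would come from the monitoring feedback loop rather than constants.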

The true power lies in its unified intelligence layer: logs from all three clouds are normalized and ingested into a centralized VectorDB, enabling semantic search, trend analysis, and cross-platform anomaly detection, effectively building a knowledge graph of AI performance. Real-time RAG (Retrieval-Augmented Generation) APIs build on this by contextualizing every query, enriching AI responses with historical trends, user behavior patterns, and external data from sources like Snowflake or Databricks. This turns every interaction into a data-driven decision point, ensuring your AI isn’t just responsive, but proactively intelligent.

Regardless of the chosen provider, the platform interacts with external LLM providers like the Claude API or open-source models hosted on Hugging Face for specialized processing. The selected architecture also relies on centralized data warehouses like ClickHouse, Databricks, or Snowflake, which feed into an embedding layer. These embeddings populate the VectorDBs to ground the models in your proprietary data. Concurrently, feedback and usage logs are deposited into your provider's object storage, such as Amazon S3, Google Cloud Storage, or Microsoft Azure Blob Storage, to fuel the continuous improvement loop.
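To make the embedding and retrieval step concrete, here is a toy sketch. It assumes nothing about the actual stack: `embed` is a word-count stand-in for a real embedding model, `VectorIndex` is a hypothetical in-memory substitute for a vector database, and the sample records are invented for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorIndex:
    """Hypothetical in-memory index; a real system would use a vector database."""

    def __init__(self):
        self._rows = []  # (doc_id, text, vector)

    def add(self, doc_id: str, text: str) -> None:
        self._rows.append((doc_id, text, embed(text)))

    def search(self, query: str, k: int = 1):
        """Return the k most similar (doc_id, text) pairs for the query."""
        q = embed(query)
        ranked = sorted(self._rows, key=lambda r: cosine(q, r[2]), reverse=True)
        return [(doc_id, text) for doc_id, text, _ in ranked[:k]]


# Invented sample records standing in for warehouse rows.
index = VectorIndex()
index.add("inv-1", "invoice payment terms net 30 days")
index.add("ship-1", "container shipment delayed at port")
```

A query such as `index.search("when is the invoice payment due")` ranks the invoice record first because it shares terms with the query. A learned embedding model would capture similarity in meaning rather than exact word overlap, which is the point of the embedding layer.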

EuroStack Architecture

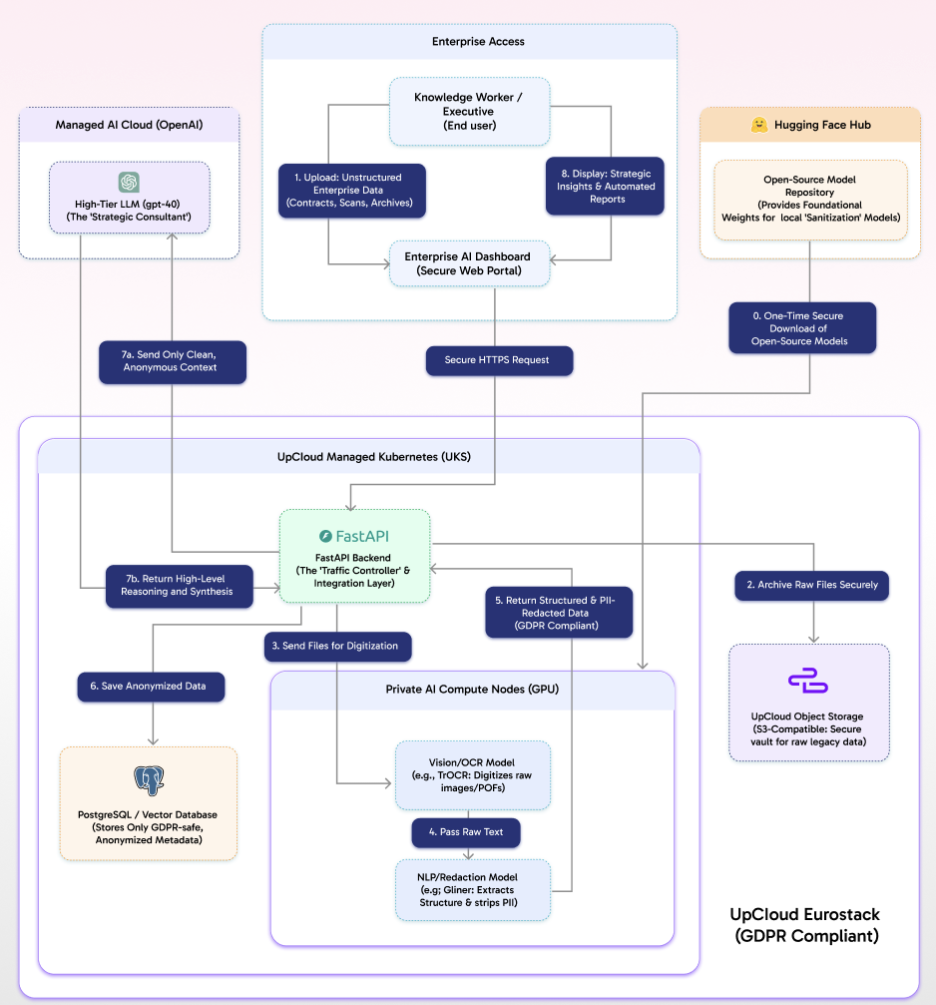

For enterprises operating under strict data sovereignty laws, we utilize a specialized Eurostack architecture that ensures full GDPR compliance by keeping sensitive data strictly within European borders. This infrastructure is anchored on the UpCloud Eurostack, providing a highly secure boundary for enterprise operations. The workflow begins when a knowledge worker or executive uploads unstructured enterprise data, such as legacy contracts or scanned archives, through a secure web portal known as the Enterprise AI Dashboard. These requests are routed via secure HTTPS to a FastAPI backend deployed on UpCloud managed Kubernetes, which acts as the system's traffic controller. Upon receiving the data, the backend immediately archives the raw files in a secure, S3-compatible UpCloud Object Storage vault.

Secure, GDPR-Compliant AI Processing: Bridging Privacy and Intelligence

This architecture is designed to harness the power of advanced AI while strictly adhering to GDPR and data privacy requirements. By leveraging a multi-layered, privacy-first approach, the system ensures that sensitive information never leaves the secure perimeter, yet still enables high-level AI-driven insights.

At the core, private AI compute nodes equipped with GPUs process all unstructured enterprise data, such as contracts, scans, and archives, within a GDPR-compliant UpCloud Eurostack environment. The workflow begins with a vision/OCR model like TrOCR, which digitizes raw images and PDFs into machine-readable text. This text is then passed to an NLP redaction model such as GLiNER, which extracts structural elements while stripping personally identifiable information. The result is structured, anonymized metadata, which is stored in a stateful PostgreSQL/Vector Database so that only GDPR-safe data persists.
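The redaction step can be illustrated with a deliberately simple sketch. Note the substitution: regular expressions stand in here for the NER-based redaction model, and they only catch emails and phone-like numbers. The patterns and placeholder tokens are assumptions for illustration, not the pipeline's actual rules, and a production system would need far broader coverage (names, addresses, identifiers).

```python
import re

# Simplified patterns; real PII detection needs a trained model and wider coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s()-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def is_clean(text: str) -> bool:
    """Check that no known PII pattern remains before text leaves the perimeter."""
    return not (EMAIL.search(text) or PHONE.search(text))
```

For example, `redact("Contact jane.doe@example.com or +49 30 1234567.")` returns `"Contact [EMAIL] or [PHONE]."`. A check such as `is_clean` could act as a final guard in the backend before any context is forwarded to an external model.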

Once sanitized, the FastAPI backend acts as a secure gateway, forwarding only clean, anonymous context to a high-tier managed AI cloud like OpenAI’s GPT-4o. This external LLM, acting as a strategic consultant, processes the context to generate high-level reasoning, synthesis, and actionable insights without ever exposing raw or sensitive data. The insights are then returned to the backend and presented to the end user via the Enterprise AI Dashboard, delivering strategic reports and automated recommendations in real time.

By combining in-region privacy preservation with cloud-scale intelligence, this architecture bridges the gap between stringent European data protection and cutting-edge AI capabilities, enabling enterprises to innovate responsibly and securely.

Hosted Solution Architecture

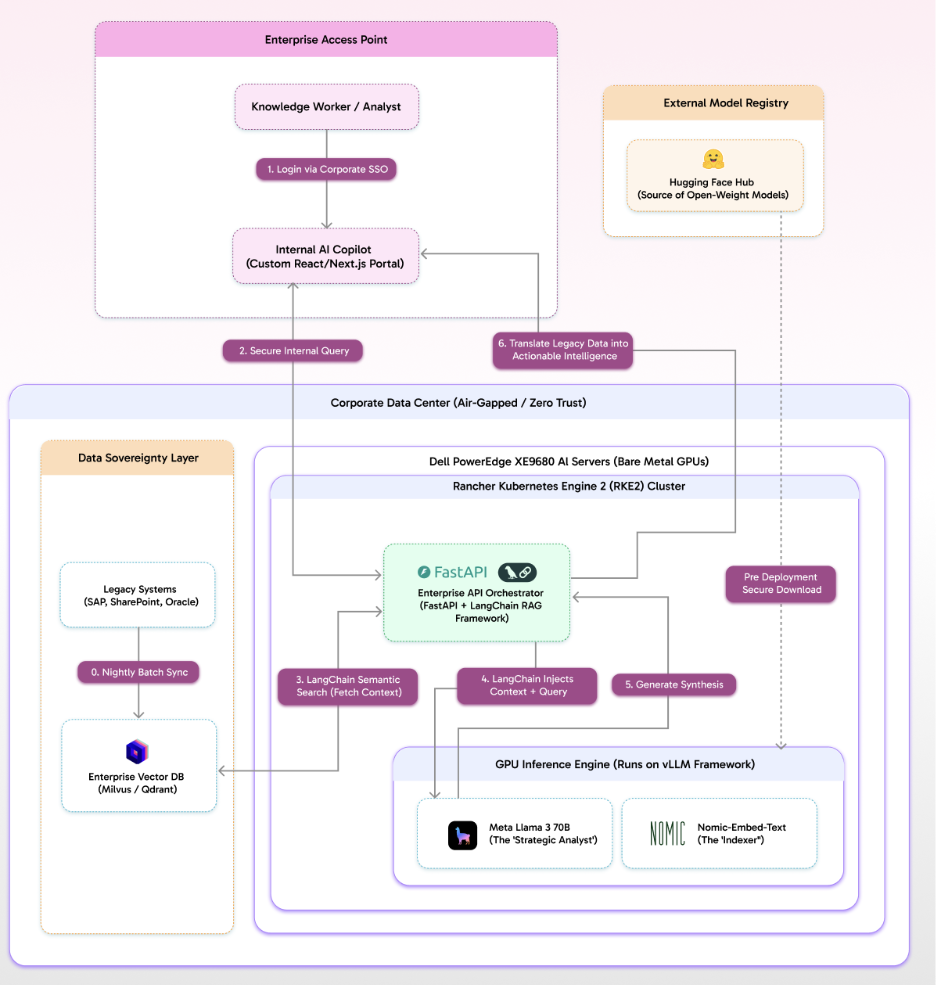

For organizations requiring the utmost security, such as those operating in air-gapped or zero-trust environments, a fully hosted on-premises solution provides absolute data sovereignty. This architecture is built upon a robust hardware foundation of bare-metal GPU servers, such as Dell PowerEdge XE9680s, deployed deep within the corporate data center. The software runtime is managed by a Rancher Kubernetes Engine 2 cluster, ensuring high availability and secure workload orchestration.

This architecture enables secure, sovereign AI operations by first bringing open-weight foundational models into the environment through a pre-deployment secure download from external registries like Hugging Face Hub. To ensure AI models are grounded in corporate knowledge, a dedicated data sovereignty layer performs nightly batch synchronization, pulling information from legacy systems such as SAP, SharePoint, and Oracle into an enterprise vector database like Milvus or Qdrant. This process guarantees that AI models operate with the latest internal data while maintaining full compliance and security.
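One way to keep a nightly sync cheap is to re-embed only documents whose content changed. The sketch below assumes that approach, which the architecture above does not prescribe; `plan_sync` and its inputs are hypothetical, with plain dictionaries standing in for the legacy source systems and for the hashes recorded alongside the vector database.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(source_docs: dict[str, str], indexed_hashes: dict[str, str]):
    """Return (to_upsert, to_delete) document-id lists for one sync run.

    source_docs:    doc_id -> current text pulled from the source systems
    indexed_hashes: doc_id -> content hash stored at the last sync
    """
    # New or changed documents need to be (re-)embedded and upserted.
    to_upsert = sorted(
        doc_id for doc_id, text in source_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    )
    # Documents that vanished from the source should leave the index too.
    to_delete = sorted(
        doc_id for doc_id in indexed_hashes if doc_id not in source_docs
    )
    return to_upsert, to_delete
```

Unchanged documents are skipped entirely, so GPU time for embedding is spent only on the nightly delta.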

The workflow begins when a knowledge worker logs into an internal AI copilot portal via corporate SSO. Their query is securely routed to an enterprise API orchestrator, powered by FastAPI and the LangChain RAG framework. This orchestrator performs a semantic search against the vector database to fetch relevant internal context, which it then injects alongside the user’s query into a high-performance GPU inference engine running on the vLLM framework. Within this engine, specialized models handle the workload: Nomic Embed Text processes contextual embeddings, while Meta Llama 3 70B acts as the strategic analyst to generate a comprehensive synthesis.
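The orchestration flow (retrieve context, inject it with the query, generate) can be sketched independently of any framework. This is an illustrative outline rather than the orchestrator's code: `retrieve` and `generate` are injected callables standing in for the vector search and the inference engine, and the prompt wording is an assumption.

```python
from typing import Callable, Sequence


def build_prompt(question: str, passages: Sequence[str]) -> str:
    """Combine retrieved passages and the user's question into one prompt."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(
    question: str,
    retrieve: Callable[[str, int], Sequence[str]],  # stand-in for vector search
    generate: Callable[[str], str],                 # stand-in for the LLM call
    k: int = 3,
) -> str:
    """Retrieve top-k passages, inject them into the prompt, and generate."""
    passages = retrieve(question, k)
    return generate(build_prompt(question, passages))
```

Passing the retriever and generator in as functions keeps the flow testable with stubs and leaves the choice of vector database and model open.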

Finally, the orchestrator translates the processed legacy data into actionable intelligence and delivers it directly to the user’s interface. Throughout this process, no proprietary information leaves the corporate perimeter, which preserves end-to-end security, compliance, and data sovereignty while every operation stays contained within the corporate infrastructure.

Why Choose Us?

We turn your data into a strategic advantage, combining deep industry expertise with cutting-edge AI solutions. Our team has delivered measurable results for Fortune 500 companies and innovative startups alike, specializing in sectors where compliance and innovation must work together, such as FinTech, healthcare, and e-commerce. We don’t just build solutions; we integrate with your team, ensuring smooth knowledge transfer and sustainable outcomes. With a global presence and a focus on cost-efficient, scalable technology, we give you the tools to stay ahead.

Our Success Framework

Your success starts with your goals. Our framework is designed to align AI solutions with your north star metric, whether it’s improving efficiency, reducing risk, or enabling real-time decisions. We use stack-agnostic, flexible architectures that adapt to your needs, not the other way around. By focusing on reliable data foundations and AI-driven insights, we turn raw information into actionable strategies. Every step, from prioritizing use cases to scaling and governance, is structured to deliver measurable, long-term value.

Get In Touch

Let’s discuss how we can help you unlock the full potential of your data, turning complex challenges into clear opportunities and actionable strategies. Whether you’re looking to streamline operations, enhance decision-making, or future-proof your technology stack, our team is ready to collaborate. Reach out at Discovery@analyze.agency or book a consultation directly; we’ll respond within 24 hours to start crafting a tailored roadmap for your success.

With a proven track record across diverse industries and global markets, we bring more than just expertise: we bring a partnership mindset. Our hands-on approach ensures you get clear, actionable direction and the support to implement it effectively. From initial discovery to full-scale deployment, we’re committed to making your next strategic move smarter, faster, and more impactful. Let’s build your data-driven future together.

Our Latest Blogs

The Future of Intelligent Automation: How AI Is Transforming Businesses

Explore how AI-driven automation is reshaping industries from finance to healthcare, and why adaptability, not just automation, is the new competitive edge.

Building Scalable Data Systems: The Blueprint for Long-Term Success

A deep dive into what makes data systems truly scalable, from architecture design and storage strategies to AI-ready pipelines that evolve with your business.

Cloud Migration Done Right: Lessons from 250+ Projects

Discover the common challenges enterprises face during cloud migration and how to overcome them with the right tools, planning, and DevOps strategy.

From Data to Decisions: The Rise of Predictive Analytics in Enterprises

Learn how companies are using predictive analytics to forecast trends, manage risks, and make smarter decisions with AI-powered insights.

Why Progressive Web Apps (PWAs) Are the Future of Digital Experience

PWAs combine the best of web and mobile: offline capability, lightning-fast speed, and smooth UX. Here’s why they’re the next big thing for businesses.

The Power of Document Intelligence: Automating Data Extraction at Scale

Uncover how AI and NLP technologies are transforming document management, reducing manual effort and unlocking hidden insights from files and forms.

Powered by Leading Technologies

We leverage proven platforms to ensure scalability, security, and innovation.

What Our Clients Say About Us

Analyze Agency transformed our Snowflake warehouse into an efficient data powerhouse.

NBC Team

Analyze Agency’s team was great to work with and helped us tremendously in gathering relevant data for our clients. We enjoyed working with them and would definitely recommend them.

Hackers Rank Team

Excellent data scientists. Will work with them again in the future.

Moneylion Team

Expert, quick pace, practical.

Upwork Team

The team continually repointed us to focusing on results. They dug into the analysis quickly, understood the context of our business, and worked with us to create actionable items to move forward with. If you want to move fast and work with someone who "gets it", then Chris is the right person for you.

Goli Team

Analyze Agency was very good, but more importantly, we had a ton of follow-up work and questions, and the team made themselves available at odd hours and were very responsive throughout. They even spotted significant issues that were driving us nuts in our raw data.

Framer Team

Ready to Transform Your Data with AI?

Let’s design intelligent solutions that turn your data into powerful insights. Whether you need scalable pipelines, AI-powered analytics, or strategic consulting, our experts are here to help.