Deploying Custom LLMs

Introduction

In an era where artificial intelligence is reshaping every industry, deploying your own custom large language model (LLM) isn't just a technical capability; it's a strategic advantage that puts you in control of your AI destiny. Off-the-shelf AI solutions have their place, but they come with limitations: shared infrastructure, data privacy concerns, generic outputs, and dependency on external providers. Custom LLM deployment changes the game by giving you complete data sovereignty, fine-tuned performance for your specific domain, cost predictability as you scale, and the compliance assurance needed to meet regulations and standards like HIPAA, GDPR, or SOC 2 on your terms.

The journey to deploying your own LLM involves critical decisions at every turn. Whether you're leveraging open-source models like Llama, Mistral, or Falcon, or fine-tuning foundation models for specialized tasks, you'll navigate choices around infrastructure (cloud vs. on-premises), serving and orchestration frameworks (vLLM, TensorRT-LLM, Ray), model quantization, GPU selection, inference optimization, vector databases, and API gateway design. The technical landscape can feel overwhelming, but each decision directly impacts your model's performance, reliability, and return on investment.

At Analyze Agency, we don't just help you deploy models; we architect AI systems that align with your business objectives, scale with your growth, and maintain peak performance under real-world conditions. We transform complex technical requirements into streamlined deployment strategies, ensuring your custom LLM becomes a powerful asset that delivers measurable business value. Ready to take ownership of your AI infrastructure and unlock the full potential of custom language models?

Deploying Custom LLMs

Bloomberg revolutionized financial intelligence by building BloombergGPT, a 50-billion-parameter model trained on decades of proprietary financial documents, dramatically outperforming generic models on specialized tasks like sentiment analysis and regulatory classification. CarMax transformed its automotive retail operation by deploying a custom LLM that generates 45,000 unique vehicle descriptions at scale, eliminating the need for massive content teams while creating SEO-optimized descriptions that traditional dealerships can't match. Morningstar launched "Mo," an AI-powered investment research assistant that cut analyst research time by 30% and report writing time by 50%, all while maintaining strict control over data sources to ensure the system exclusively references vetted, proprietary financial information rather than unreliable web content.

These success stories represent a new category of competitive advantage available to organizations willing to take control of their AI infrastructure. We help organizations deploy similar custom LLM architectures through proven frameworks tailored to specific operational and regulatory requirements. Whether your needs demand public cloud hyperscalers for maximum scalability, sovereign European infrastructure for data residency compliance, or fully self-hosted environments for absolute control, each deployment path offers distinct advantages depending on your data sensitivity, performance requirements, and governance mandates.

Technical Architecture

A robust custom LLM deployment requires a carefully orchestrated technical stack that balances performance, scalability, and operational efficiency. Our architecture leverages Kubernetes-based containerization for dynamic GPU allocation and horizontal scaling, implements optimized inference engines like vLLM or TensorRT-LLM for maximum throughput, and integrates vector databases for retrieval-augmented generation tasks. The infrastructure includes comprehensive monitoring through Prometheus and Grafana, automated model versioning with rollback capabilities, API gateways with intelligent caching, and secure secrets management. Whether deployed on AWS, Azure, GCP, sovereign European clouds, or self-hosted infrastructure, the architecture maintains consistent reliability while adapting to your specific latency requirements, compliance mandates, and cost constraints.
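To make the "API gateways with intelligent caching" idea concrete, here is a minimal sketch of an exact-match response cache sitting in front of an inference call. The names `ResponseCache` and `fake_generate`, the entry limit, and the TTL are assumptions made for illustration; a real gateway would call an actual inference server and would probably use a shared store instead of in-process memory.

```python
import hashlib
import time
from collections import OrderedDict
from typing import Callable


class ResponseCache:
    """Exact-match LRU cache with a time-to-live, keyed by model and prompt."""

    def __init__(self, max_entries: int = 1024, ttl_seconds: float = 300.0):
        self.max_entries = max_entries
        self.ttl_seconds = ttl_seconds
        self._store = OrderedDict()  # key -> (stored_at, response_text)
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Hash so that long prompts do not become long dictionary keys.
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get_or_generate(self, model: str, prompt: str,
                        generate: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        now = time.monotonic()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            self._store.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return entry[1]
        self.misses += 1
        text = generate(prompt)  # only reached on a miss or an expired entry
        self._store[key] = (now, text)
        self._store.move_to_end(key)
        while len(self._store) > self.max_entries:
            self._store.popitem(last=False)  # evict least recently used
        return text


def fake_generate(prompt: str) -> str:
    # Hypothetical stand-in for a request to an inference server.
    return f"echo: {prompt}"
```

Exact-match caching only helps when identical prompts repeat (for example, templated catalog or FAQ requests); whether that holds depends on the workload.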

Hyperscaler Section

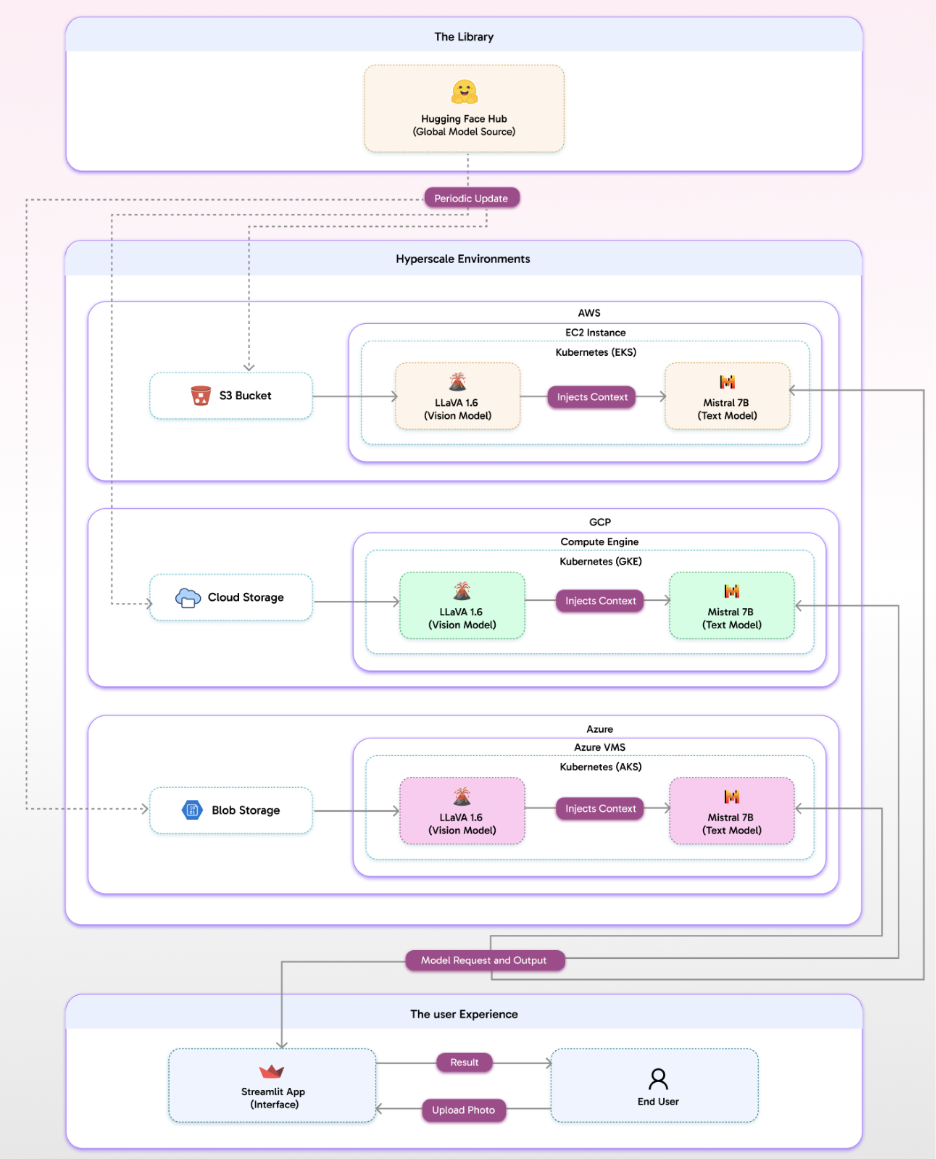

A leading global fashion retailer faced a critical scaling challenge: their merchandising team needed to publish 15,000+ new product listings monthly, but manual photography review and description writing created weeks of delay between inventory arrival and online availability. They deployed a custom AI architecture on AWS using managed Kubernetes (EKS) that transformed their workflow entirely. Now, when warehouse associates photograph newly arrived items through a mobile app, images flow securely to a private AI cluster where an open-source vision model (LLaVA v1.6) analyzes fabric texture, color accuracy, and styling details, then passes structured context to a fine-tuned text model (Mistral 7B). Within 8 seconds, the system generates brand-aligned, SEO-ready descriptions, applies category tags, and publishes directly to their commerce platform, all while keeping proprietary product data within their controlled cloud environment.
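The two-stage hand-off described here (vision model produces structured context, text model writes the description) can be sketched roughly as follows. This is an illustrative outline, not the retailer's implementation: `GarmentAttributes`, `build_description_prompt`, `describe_product`, and the default brand voice are hypothetical, and the two models are passed in as plain callables so the flow can be exercised without any model server.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, List


@dataclass
class GarmentAttributes:
    """Structured context the vision stage hands to the text stage."""
    category: str
    fabric: str
    color: str
    styling_notes: List[str]


def build_description_prompt(attrs: GarmentAttributes, brand_voice: str) -> str:
    # Serialising the attributes as JSON keeps the hand-off explicit.
    return (
        f"Write a product description in a {brand_voice} tone.\n"
        "Use only the attributes below; do not invent details.\n"
        f"Attributes: {json.dumps(asdict(attrs), sort_keys=True)}"
    )


def describe_product(
    image_bytes: bytes,
    analyze_image: Callable[[bytes], GarmentAttributes],
    generate_text: Callable[[str], str],
    brand_voice: str = "warm, concise",
) -> dict:
    attrs = analyze_image(image_bytes)  # stage 1: vision model
    prompt = build_description_prompt(attrs, brand_voice)
    description = generate_text(prompt)  # stage 2: text model
    return {
        "description": description.strip(),
        "tags": [attrs.category, attrs.color],
    }
```

Keeping the intermediate attributes as a typed structure means the output of the vision stage can be logged and reviewed independently of the generated text.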

The results were transformative: time-to-publish dropped from 12 days to under 24 hours, content production costs fell by 60%, and the merchandising team shifted from repetitive writing to strategic curation. More critically, they now own a proprietary AI capability that improves continuously with their data, creating a sustainable competitive advantage that compounds as their catalog grows. This same architectural approach is delivering similar results across industries (logistics companies automating shipment documentation, manufacturers streamlining quality inspections, and healthcare organizations processing medical imaging tasks), all while maintaining complete control over the sensitive data, regulatory compliance, and intellectual property that define their market position.

Eurostack Section

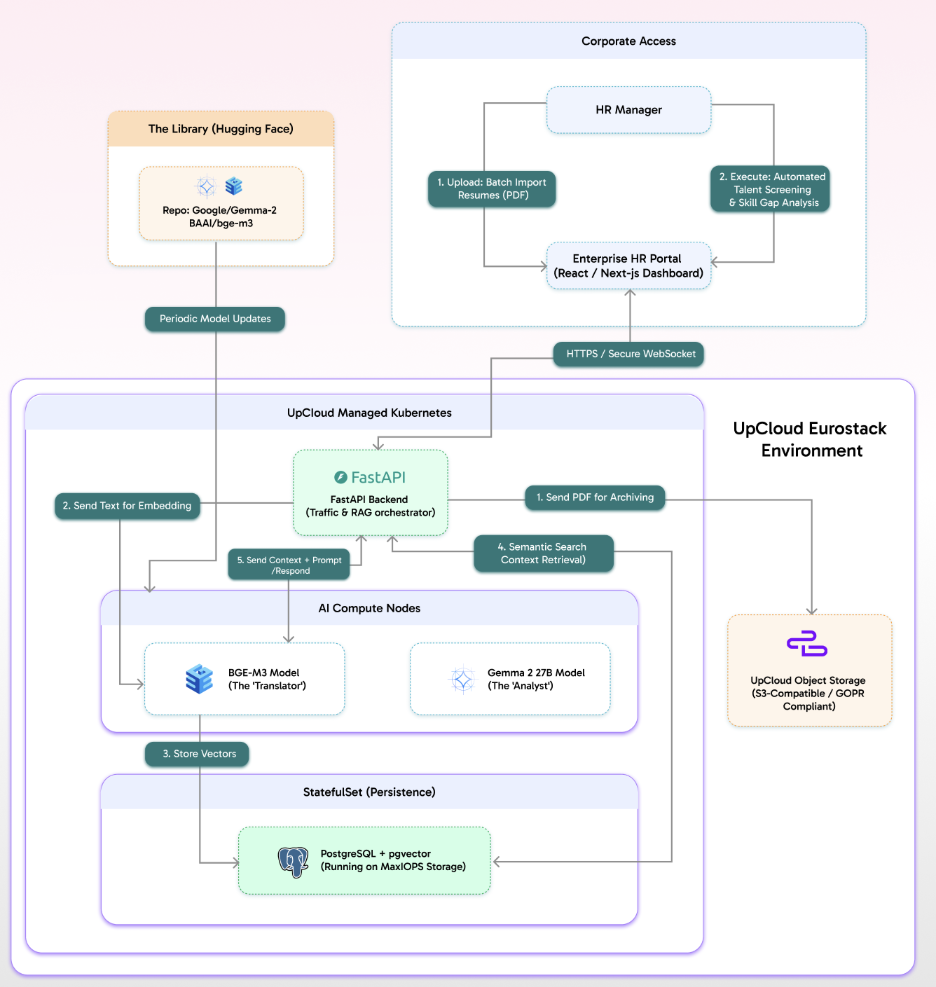

A multinational pharmaceutical company operating across the EU faced a critical challenge: their HR division needed to process thousands of candidate resumes monthly for specialized roles, but strict GDPR requirements and internal data governance policies prohibited sending any employee or candidate information to US-based cloud providers or third-party AI services. Their existing manual screening process created 3-week delays in identifying qualified candidates, and their talent acquisition team spent 60% of their time on initial resume reviews rather than strategic candidate engagement.

They deployed a custom AI solution on UpCloud's European sovereign infrastructure, with all compute and storage resources located exclusively in EU data centers. When HR managers upload batches of resumes through their enterprise portal, the PDFs are securely archived in GDPR-compliant object storage, then processed by a FastAPI backend running on managed Kubernetes. The system uses a multilingual embedding model (BGE-M3) to convert resume text into searchable vectors stored in PostgreSQL with pgvector, while a specialized language model (Gemma 2 27B) analyzes candidate qualifications against job requirements and identifies skill gaps, all within minutes and with complete audit trails proving data never left European infrastructure.
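The vector-matching step can be illustrated with a small, dependency-free sketch. It ranks resume vectors against a job-requirement vector by cosine similarity, which is the kind of comparison a vector store such as pgvector performs at scale. The function names, the candidate IDs, and the tiny two-dimensional vectors are assumptions for illustration; real embeddings have hundreds or thousands of dimensions and would be queried in the database, not in application code.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)


def rank_candidates(
    job_vector: Sequence[float],
    resume_vectors: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Score every resume against the job vector and keep the best top_k."""
    scored = [
        (candidate_id, cosine_similarity(job_vector, vector))
        for candidate_id, vector in resume_vectors.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical usage with toy vectors.
shortlist = rank_candidates(
    [1.0, 0.0],
    {"cand-a": [1.0, 0.0], "cand-b": [0.0, 1.0], "cand-c": [1.0, 1.0]},
    top_k=2,
)
```

In this toy example the shortlist contains `cand-a` first and `cand-c` second, because their vectors point most nearly in the same direction as the job vector.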

The results transformed both operations and compliance posture: resume screening time dropped from 3 weeks to under 2 hours, talent acquisition costs decreased by 45%, and most critically, the company achieved bulletproof GDPR compliance without compromising AI capabilities. Their legal team now has documented proof that candidate personal data never crosses jurisdictional boundaries, eliminating regulatory risk that could have resulted in millions in fines. This sovereign cloud approach is becoming essential for European financial institutions processing loan applications, healthcare providers analyzing patient records, and government agencies modernizing citizen services: any organization where data residency isn't merely a preference but a legal mandate that cannot be compromised for the convenience of US-based AI platforms. By maintaining complete control over infrastructure location and data flows, these organizations gain both competitive AI capabilities and the regulatory certainty that protects their license to operate.

Hosted Section

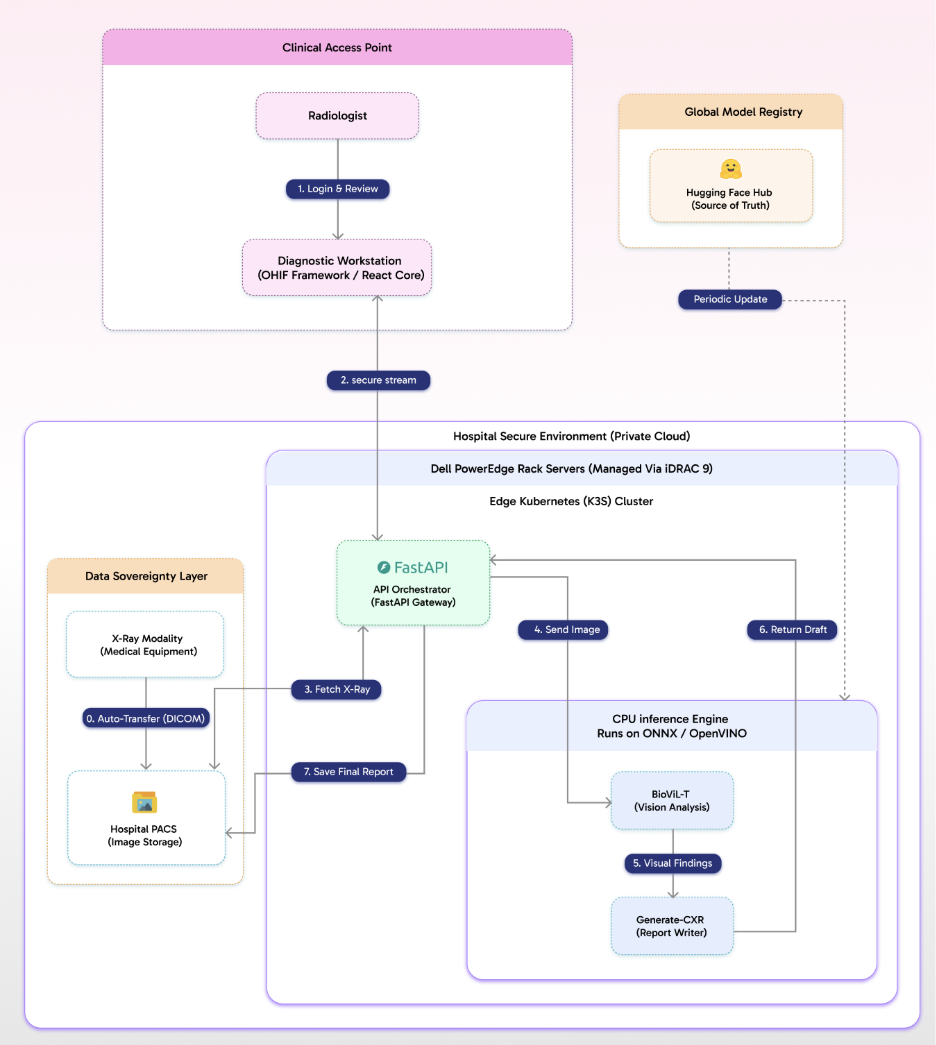

A major regional hospital network in Germany faced an impossible tradeoff: their radiology department needed AI assistance to handle growing imaging volumes and reduce radiologist burnout, but patient privacy laws and institutional policy absolutely prohibited transmitting medical images outside their physical infrastructure, even to European cloud providers. Their radiologists were spending 40% of their time writing preliminary reports for routine X-rays, creating dangerous backlogs during peak hours, yet every AI solution they evaluated required uploading patient data to external servers. They needed diagnostic AI that operated entirely within their existing hospital infrastructure, with zero data egress and full integration with their legacy PACS (Picture Archiving and Communication System), which stored decades of patient imaging history.

They deployed a completely self-hosted AI architecture running on Dell PowerEdge rack servers managed through their existing iDRAC infrastructure, with an edge Kubernetes (K3s) cluster orchestrating the entire workflow behind the hospital firewall. When radiologists open X-ray images in their diagnostic workstation, built on the DICOM-compliant OHIF framework, the images are automatically fetched from the hospital PACS and streamed securely to a FastAPI gateway. A CPU-optimized vision model (BioViL-T) running on ONNX/OpenVINO analyzes the images for preliminary findings (fractures, opacities, anomalies), then passes structured observations to a specialized medical report generation model that drafts initial clinical impressions in seconds. The radiologist reviews, edits, and approves the AI-generated draft directly in their workstation, with the final report saved back to PACS. Every component runs on hospital-owned hardware, all models are sourced from Hugging Face and updated periodically, inference requires no internet connectivity, and patient data never leaves the physical premises. Preliminary report time dropped from 12 minutes to under 90 seconds, radiologist throughput increased by 35%, and the hospital maintained absolute compliance with patient privacy regulations while reducing the cognitive load that was driving physician burnout.
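The review step in this workflow (the radiologist edits and approves the draft before it is saved back to PACS) amounts to a simple approval gate. The sketch below shows one way such a gate could look. `Report`, `ReportStatus`, and `save_to_archive` are hypothetical names, and the in-memory dictionary merely stands in for the archive; nothing here reflects the hospital's actual software.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class ReportStatus(Enum):
    DRAFT = "draft"
    APPROVED = "approved"


@dataclass
class Report:
    study_id: str
    text: str
    status: ReportStatus = ReportStatus.DRAFT
    approved_by: Optional[str] = None
    previous_versions: List[str] = field(default_factory=list)

    def edit(self, new_text: str) -> None:
        if self.status is ReportStatus.APPROVED:
            raise ValueError("approved reports cannot be edited")
        self.previous_versions.append(self.text)  # keep the AI draft for audit
        self.text = new_text

    def approve(self, radiologist: str) -> None:
        if not radiologist:
            raise ValueError("an approving radiologist is required")
        self.status = ReportStatus.APPROVED
        self.approved_by = radiologist


def save_to_archive(report: Report, archive: Dict[str, str]) -> None:
    # The gate: AI-generated drafts never reach the archive unapproved.
    if report.status is not ReportStatus.APPROVED:
        raise PermissionError("only approved reports may be archived")
    archive[report.study_id] = report.text
```

Retaining the earlier versions alongside the approved text makes it possible to audit how much of each AI draft was changed by the reviewer.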

Why Choose Us?

We actively deploy projects and achieve success in America, Canada, the European Union, and APAC. We rely on real-world experience rather than uncritical adherence to untested benchmarks or polished online reviews. With experience in seven-plus countries, we take the best of American and European tech and deploy it at APAC pricing. This blend of world-class technology and cost-efficient pricing is one of the advantages our clients value most.

Our certified experts operate from all major time zones, and they have gained experience from Fortune 500 companies and startups alike. Some of our experts are true industry pioneers, and they have authored and translated books based on open-source protocols.

Analyze Agency has a passion for data, FinTech, healthcare, and e-commerce, and has built deep knowledge of these industries. We understand your unique challenges and regulatory needs.

We integrate with your team, ensure smooth knowledge transfer, and drive change as soon as possible with our hands-on and collaborative approach.

Our Success Framework

We understand your strategic AI objectives (reducing operational costs, accelerating time-to-market, ensuring regulatory compliance, or building proprietary capabilities) and architect custom LLM deployments that deliver measurable progress against your specific north star metrics. Our approach provides quantifiable outcomes tied directly to business impact: processing time reductions, cost per transaction, compliance audit pass rates, and competitive differentiation that compounds over time. We don't prescribe rigid technology stacks; instead, we design solutions around your operational requirements, existing infrastructure, and risk tolerance, ensuring you maintain flexibility as markets evolve and new opportunities emerge.

Our solutions are intentionally infrastructure-agnostic, allowing your organization to start on hyperscale cloud, migrate to sovereign infrastructure as regulations change, or transition to self-hosted environments as your AI maturity grows. We move beyond generic API integrations and build purpose-trained models fine-tuned on your domain-specific data, industry terminology, and unique tasks. Through continuous model performance monitoring, A/B testing of prompt strategies, and iterative optimization based on production feedback, we create reliable AI infrastructure foundations that deliver actionable intelligence, enable confident team adoption, and transform custom LLM capabilities from temporary technical implementations into lasting competitive advantages that scale with your business.
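As a rough illustration of A/B testing prompt strategies, the sketch below assigns each user to a prompt variant deterministically and tracks a per-variant success rate. `assign_variant`, `ExperimentLog`, the experiment name, and the notion of "success" (for example, a user accepting the generated answer) are all assumptions for this example; a real evaluation would also need a significance test before declaring a winner.

```python
import hashlib
from collections import defaultdict
from typing import Sequence


def assign_variant(user_id: str, experiment: str,
                   variants: Sequence[str] = ("A", "B")) -> str:
    """Map a user to a variant; the same inputs always give the same variant."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


class ExperimentLog:
    """Counts successes and trials per prompt variant."""

    def __init__(self) -> None:
        self._counts = defaultdict(lambda: [0, 0])  # variant -> [successes, trials]

    def record(self, variant: str, success: bool) -> None:
        counts = self._counts[variant]
        counts[0] += 1 if success else 0
        counts[1] += 1

    def success_rate(self, variant: str) -> float:
        successes, trials = self._counts[variant]
        return successes / trials if trials else 0.0


# Hypothetical usage: route a user, then log whether the output was accepted.
log = ExperimentLog()
variant = assign_variant("user-123", "summary-prompt-v2")
log.record(variant, success=True)
```

Hashing the experiment name together with the user ID keeps assignments stable across sessions while letting different experiments split users independently.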

Get In Touch

Are you sending sensitive data to third-party AI APIs with no control over privacy or cost? Are you struggling with generic models that don't understand your industry terminology or business context? Maybe you're evaluating whether to build custom LLM infrastructure but are unsure which deployment path (hyperscale cloud, sovereign infrastructure, or self-hosted) aligns with your regulatory requirements and strategic goals. Take the first step now, because the organizations gaining competitive advantage aren't waiting for perfect clarity; they're deploying controlled AI capabilities that compound in value with every use. Custom LLM deployment isn't just a technical decision; it's a strategic investment in proprietary intelligence that becomes more valuable as your business scales. Contact us at Discovery@analyze.agency and we'll assess your specific requirements, share proven architectural approaches from similar deployments, and recommend a clear path forward that transforms AI from an operational expense into a lasting competitive asset.

Powered by Leading Technologies

We leverage proven platforms to ensure scalability, security, and innovation.

What Our Clients Say About Us

Analyze Agency transformed our Snowflake warehouse into an efficient data powerhouse.

NBC Team

Analyze Agency's team was great to work with and helped us tremendously in gathering relevant data for our clients. We enjoyed working with them and would definitely recommend them.

HackerRank Team

Excellent data scientists. Will work with them again in the future.

MoneyLion Team

Expert, quick pace, practical.

Upwork Team

The team continually refocused us on results. They dug into the analysis quickly, understood the context of our business, and worked with us to create actionable items to move forward with. If you want to move fast and work with someone who "gets it", then Chris is the right person for you.

Goli Team

Analyze Agency's work was very good, but more importantly, we had a ton of follow-up work and questions, and the team made themselves available at odd hours and were very responsive throughout. They even spotted significant issues that were driving us nuts in our raw data.

Framer Team

Ready to Transform Your Data with AI?

Let’s design intelligent solutions that turn your data into powerful insights. Whether you need scalable pipelines, AI-powered analytics, or strategic consulting, our experts are here to help.