Data Engineering

Introduction

In an era where data fuels innovation and competitive advantage, the role of data engineering has never been more critical. Data engineering is the backbone of modern data infrastructure: it transforms raw data into a scalable, reliable, and actionable asset. It bridges the gap between disparate data sources and the advanced analytics, AI, and real-time insights that drive business success.

Data engineering is more than just building pipelines or managing databases; it’s about architecting systems that ensure data is clean, accessible, and optimized for performance. From designing robust ETL processes to implementing real-time data streaming, data engineers create the infrastructure that empowers organizations to harness the full potential of their data. When executed with precision, data engineering eliminates bottlenecks, reduces latency, and delivers a foundation for analytics and AI initiatives that scale with your business.

At Analyze.Agency, we specialize in crafting data engineering solutions that align with your strategic goals. By leveraging best practices, cutting-edge tools, and a deep understanding of modern data architectures, we help organizations build resilient, high-performance data pipelines. The result? A seamless flow of trusted data that accelerates decision-making, enhances operational efficiency, and unlocks new opportunities for growth.

Data Engineering

In today’s data-driven world, robust data engineering is the backbone of innovation and operational excellence. Companies like Spotify leverage advanced data pipelines to deliver hyper-personalized music recommendations in real time, while Delivery Hero dynamically optimizes logistics and pricing by processing streaming event data at scale. These examples highlight how modern enterprises depend on strong data engineering foundations to power analytics, machine learning, and real-time decision-making across their operations.

Technical Architecture

Designing a data engineering platform is not a one-size-fits-all endeavor. The architecture must adapt to a variety of factors, including data volume, latency demands, compliance obligations, and budgetary considerations. With the industry evolving at a rapid pace, distinguishing between time-tested architectural patterns and fleeting, marketing-driven tools can be challenging.

At Analyze.Agency, we specialize in designing and deploying enterprise-grade data engineering platforms that span multiple regions. Our solutions are built to support high-throughput transactional systems, real-time analytics, and stringent regulatory standards such as GDPR and HIPAA.

In this guide, we explore the most effective and reliable data engineering architectures in use today.

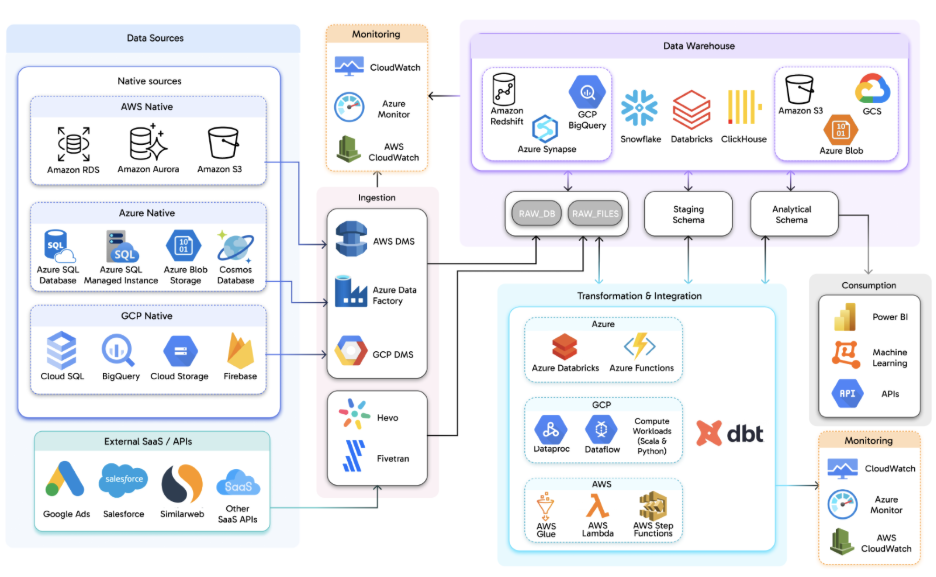

HyperScaler Solution

The HyperScaler architecture is designed to meet the demands of modern enterprises by enabling high-volume, multi-source data ingestion, elastic processing, and enterprise-grade reliability across cloud providers like AWS, Azure, and GCP. It seamlessly integrates native cloud data sources such as managed relational databases, object storage, and cloud-native services with external SaaS platforms and APIs, creating a unified data ecosystem.

This architecture leverages a hybrid approach for data ingestion, combining managed migration services and third-party platforms to ensure continuous, non-intrusive data flow. Raw data is preserved in raw schemas for replayability, then transformed and enriched using cloud-native compute services, distributed processing engines, and tools like dbt. The data warehouse layer provides scalable analytical storage, supporting both structured and semi-structured data, while built-in observability ensures real-time visibility into pipeline health and system performance.
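To illustrate why preserving raw data matters for replayability, here is a minimal Python sketch. The record layout and the names `RAW_ORDERS`, `transform_v1`, `transform_v2`, and `rebuild` are hypothetical and chosen only for illustration; in practice the raw data would live in raw schemas and the transformations would typically be expressed in dbt or a distributed processing engine.

```python
from typing import Callable

# Hypothetical raw records, kept exactly as they arrived from the source.
RAW_ORDERS = [
    {"order_id": 1, "amount_cents": 1250, "currency": "EUR"},
    {"order_id": 2, "amount_cents": 990, "currency": "USD"},
]


def transform_v1(record: dict) -> dict:
    """First version of the business logic: convert cents to a decimal amount."""
    return {"order_id": record["order_id"], "amount": record["amount_cents"] / 100}


def transform_v2(record: dict) -> dict:
    """Revised logic: keep the currency alongside the amount."""
    return {**transform_v1(record), "currency": record["currency"]}


def rebuild(raw: list[dict], transform: Callable[[dict], dict]) -> list[dict]:
    """Rebuild a derived table from raw data; each record is copied, never mutated."""
    return [transform(dict(record)) for record in raw]


# The same raw data can be replayed whenever the transformation changes.
orders_v1 = rebuild(RAW_ORDERS, transform_v1)
orders_v2 = rebuild(RAW_ORDERS, transform_v2)
```

Because the raw records are never modified, switching from `transform_v1` to `transform_v2` only requires a rebuild, not a new extraction from the source systems.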

Ideal for organizations requiring scalability, rapid onboarding of new data sources, and minimal infrastructure management, the HyperScaler architecture future-proofs data infrastructure, making it agile, reliable, and capable of driving innovation in a data-driven world.

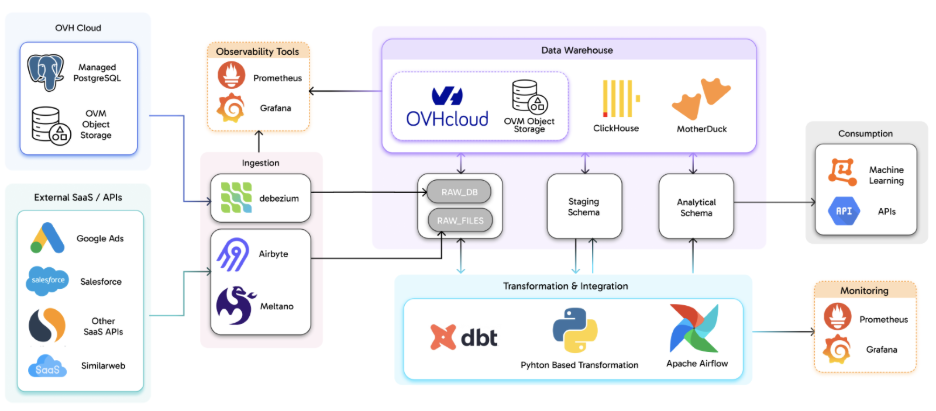

EuroStack Solution

The EuroStack architecture is purpose-built to deliver predictability, transparency, and operational control, making it an ideal choice for organizations prioritizing data sovereignty and compliance. By leveraging Linux-based virtual machines and managed PostgreSQL services hosted in European data centers, the architecture ensures that transactional data remains within GDPR-compliant boundaries from the outset. Object storage serves as a durable, cost-effective layer for raw and intermediate datasets, providing a robust foundation for data residency and long-term storage. This approach not only aligns with stringent regulatory requirements but also establishes a clear, auditable data lineage critical for industries where data governance is non-negotiable.

Data ingestion in the EuroStack architecture is source-aware and flexible, accommodating both real-time and batch workloads. Change Data Capture (CDC) pipelines, powered by tools like Debezium, stream database changes in near real time, ensuring downstream systems remain synchronized with operational data. Simultaneously, open-source frameworks such as Airbyte and Meltano handle the ingestion of SaaS platforms and external APIs, offering organizations the flexibility to integrate diverse data sources without vendor lock-in. Incoming data is written into raw ingestion zones, where it is clearly separated by source type (CDC versus SaaS), simplifying lineage tracking, schema evolution, and troubleshooting. By keeping raw data immutable, the architecture enables reprocessing as business logic evolves, ensuring long-term adaptability.
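The ingestion flow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production design: in-memory lists stand in for the raw ingestion zones, the helper names (`land`, `apply_change`, `rebuild_replica`) are hypothetical, and the events are hand-written records that follow the general shape of a Debezium change event (`op`, `before`, `after`) rather than messages read from a real connector.

```python
from collections import defaultdict

# Append-only raw zones, keyed by source type. Plain lists stand in for
# object storage paths or raw schemas (an assumption for this sketch).
raw_zones: dict[str, list[dict]] = defaultdict(list)


def land(source_type: str, payload: dict) -> None:
    """Write an incoming record to its raw zone as a copy, leaving it unmodified."""
    if source_type not in ("cdc", "saas"):
        raise ValueError(f"unknown source type: {source_type}")
    raw_zones[source_type].append(dict(payload))


def apply_change(replica: dict, event: dict, key: str = "id") -> None:
    """Apply one Debezium-style change event to an in-memory replica."""
    op = event["op"]
    if op in ("c", "r", "u"):  # create, snapshot read, update
        row = event["after"]
        replica[row[key]] = row
    elif op == "d":  # delete
        replica.pop(event["before"][key], None)
    else:
        raise ValueError(f"unsupported op: {op}")


def rebuild_replica(events: list[dict]) -> dict:
    """Replay raw CDC events in order to reconstruct the current state."""
    replica: dict = {}
    for event in events:
        apply_change(replica, event)
    return replica


land("cdc", {"op": "c", "before": None, "after": {"id": 1, "status": "new"}})
land("cdc", {"op": "u", "before": {"id": 1, "status": "new"},
             "after": {"id": 1, "status": "paid"}})
land("cdc", {"op": "c", "before": None, "after": {"id": 2, "status": "new"}})
land("cdc", {"op": "d", "before": {"id": 2, "status": "new"}, "after": None})
land("saas", {"account": "acme", "plan": "pro"})

orders_replica = rebuild_replica(raw_zones["cdc"])
```

Keeping CDC and SaaS records in separate zones means the replay logic only ever sees events of one shape, and because the zones are append-only, the replica can be rebuilt from scratch at any time.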

At the core of the EuroStack architecture lies a transformation and integration layer built around dbt and Python-based transformations, orchestrated by workflow engines like Apache Airflow. This layer applies validation rules, joins datasets, and produces analytics-ready models, all while maintaining version-controlled and testable transformation logic. Curated data is then promoted into staging and analytical schemas within high-performance engines such as ClickHouse and DuckDB, optimized for fast analytical queries and efficient resource usage. With observability treated as a first-class concern, tools like Prometheus and Grafana provide real-time visibility into pipeline health, ingestion lag, and system performance. This architecture is particularly suited for organizations seeking full control over their data pipelines, predictable infrastructure costs, and strong alignment with regulatory requirements without compromising on performance or scalability.
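As a rough sketch of what the transformation layer does, the Python example below applies a validation rule, joins two datasets, and produces a small analytics-ready model. It deliberately uses plain Python instead of dbt or Airflow; the datasets, the `is_valid` rule, and the `build_revenue_by_country` function are hypothetical examples, not part of any specific deployment.

```python
# Hypothetical curated inputs for the sketch.
customers = [
    {"customer_id": 1, "country": "DE"},
    {"customer_id": 2, "country": "FR"},
]
orders = [
    {"order_id": 10, "customer_id": 1, "amount": 40.0},
    {"order_id": 11, "customer_id": 2, "amount": -5.0},  # fails validation
    {"order_id": 12, "customer_id": 3, "amount": 15.0},  # unknown customer
    {"order_id": 13, "customer_id": 1, "amount": 10.0},
]


def is_valid(order: dict) -> bool:
    """Validation rule (assumed for illustration): amounts must be non-negative."""
    return order["amount"] >= 0


def build_revenue_by_country(orders: list[dict], customers: list[dict]):
    """Join orders to customers and aggregate revenue per country.

    Returns the model plus the IDs of rejected orders, so that failures
    stay visible instead of being silently dropped.
    """
    country_by_customer = {c["customer_id"]: c["country"] for c in customers}
    revenue: dict[str, float] = {}
    rejected: list[int] = []
    for order in orders:
        country = country_by_customer.get(order["customer_id"])
        if not is_valid(order) or country is None:
            rejected.append(order["order_id"])
            continue
        revenue[country] = revenue.get(country, 0.0) + order["amount"]
    return revenue, rejected


revenue_by_country, rejected_orders = build_revenue_by_country(orders, customers)
```

Returning the rejected order IDs alongside the model mirrors the idea of testable transformation logic: a count of rejected rows is the kind of signal that could be exported to a monitoring stack such as Prometheus and Grafana.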

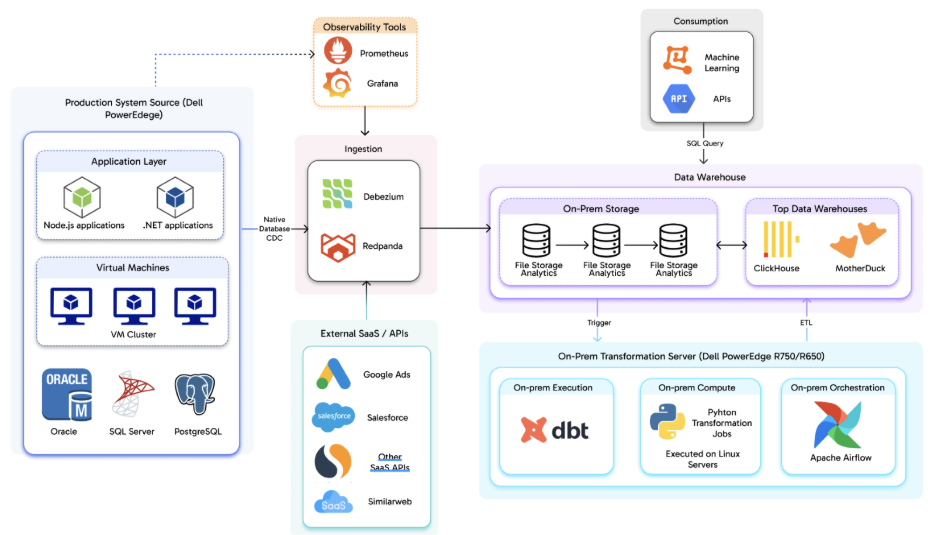

Hosted Solution

The hosted architecture is meticulously designed to provide maximum control, security, and compliance, ensuring that all components are deployed within an organization’s own infrastructure. At its foundation, enterprise-grade servers host application services and transactional databases, fully owned and operated by the organization. This setup guarantees that sensitive data remains securely within controlled environments, addressing critical concerns around data sovereignty and regulatory compliance.

Data ingestion in this architecture leverages self-managed ETL and streaming pipelines, often utilizing Change Data Capture (CDC) and message brokers to replicate changes from operational systems into analytical platforms. This approach seamlessly supports both batch and real-time data flows, while adhering to stringent governance requirements. The analytics and storage layer comprises self-hosted analytical databases and data warehouses, optimized for high-performance querying. Since the infrastructure is dedicated, performance remains predictable and unaffected by the variability of multi-tenant workloads.

The architecture emphasizes internal handling of data transformation, orchestration, and governance, providing teams with full visibility into data lineage, access controls, and audit trails, all of which are essential for regulated industries. At the consumption layer, on-prem tools deliver APIs and machine learning capabilities, ensuring all data remains within organizational boundaries. This architecture is ideal for industries with strict regulatory, latency, or governance constraints, prioritizing data sovereignty, security, and operational predictability.

Why Choose Us?

At Analyze.Agency, we understand that data engineering is not just about technology; it’s about enabling your business to move faster, make smarter decisions, and stay ahead of the competition. Our focus is on reducing the time between data generation and actionable insights, ensuring that your organization can leverage real-time data to drive innovation and operational excellence. We design and implement scalable, observable, and future-proof data engineering platforms that are seamlessly integrated into your core infrastructure, not as one-off solutions, but as long-term, maintainable systems that grow with your business. Whether you need real-time analytics, AI-driven insights, or seamless integration across cloud and on-premises environments, our architectures are built to support continuous change, scalability, and reliability.

We leverage cutting-edge technologies such as Change Data Capture (CDC), streaming platforms, and structured orchestration to ensure your data pipelines operate without disrupting production workloads. Our solutions provide full visibility into data flows, failures, and performance, enabling your teams to monitor, troubleshoot, and optimize with confidence. From HyperScaler and EuroStack architectures to fully hosted deployments, we tailor our approach to meet your unique needs, whether you require elastic scalability, strict regulatory compliance, or full control over your data infrastructure. Our expertise spans AWS, GCP, Azure, and mature open-source technologies, ensuring that your data engineering platform is flexible, secure, and built for the demands of modern enterprises.

Adopting a real-time data architecture is a strategic shift that requires more than just technical skills; it demands experience, foresight, and a deep understanding of industry-specific challenges. Our team has successfully delivered large-scale data engineering solutions across the US, Canada, and the EU, managing workloads processing billions of records daily for global leaders in gaming, FinTech, healthcare, and digital platforms. We don’t just build systems; we empower your teams through comprehensive knowledge transfer, clear documentation, and operational readiness, ensuring you can independently own, manage, and evolve your data infrastructure. The result? A robust, future-proof data foundation that supports analytics, automation, and AI without introducing unnecessary risk or complexity. Choose Analyze.Agency to turn your data into a strategic asset that drives growth and innovation.

Our Success Framework

At Analyze.Agency, our Success Framework for Data Engineering is built to minimize risk and maximize impact, ensuring your data infrastructure is not just operational, but a strategic asset that drives growth and innovation. We start by aligning data engineering initiatives with your core business objectives, ensuring every architectural decision, whether for scalability, real-time processing, or compliance, supports performance, reliability, and transparency. Before implementation, we conduct a comprehensive assessment of data quality, system dependencies, and existing architectures to identify potential challenges early, establishing a robust foundation for success. Our approach leverages Change Data Capture (CDC), streaming platforms, and modern transformation pipelines to ingest, process, and validate data without disrupting your production environments. We tailor the deployment model, whether HyperScaler, EuroStack, or hosted, to match your specific needs for scale, cost efficiency, and data sovereignty. By embedding observability, automation, and performance optimization into the platform, we ensure seamless operations, while our focus on knowledge transfer and scalable design empowers your teams to manage and evolve the system independently. The result is a resilient, low-latency data ecosystem that accelerates analytics, automation, and long-term innovation.

Get In Touch

Adopting a real-time data architecture is a transformational shift that requires more than just technical expertise; it demands deep industry experience and a strategic mindset. Our team has successfully delivered large-scale data engineering solutions across the US, Canada, and the EU, managing workloads processing billions of records daily for global leaders in gaming, FinTech, healthcare, telecom, and digital platforms. We work extensively with AWS, GCP, Azure, and mature open-source technologies, bringing insights from Fortune 500 environments and highly regulated industries to ensure your data infrastructure is secure, compliant, and high-performing.

We prioritize operational readiness and knowledge transfer, empowering your teams to own, manage, and evolve your data systems independently. Our architectures are fully documented, with clear ownership and training to ensure seamless adoption. The result? A resilient, low-latency data foundation that supports analytics, automation, and AI without unnecessary risk. Reach out to us at Discovery@analyze.agency to start building your future-proof data engineering solution today.

Our Latest Blogs

The Future of Intelligent Automation: How AI Is Transforming Businesses

Explore how AI-driven automation is reshaping industries, from finance to healthcare, and why adaptability, not just automation, is the new competitive edge.

Building Scalable Data Systems: The Blueprint for Long-Term Success

A deep dive into what makes data systems truly scalable, from architecture design and storage strategies to AI-ready pipelines that evolve with your business.

Cloud Migration Done Right: Lessons from 250+ Projects

Discover the common challenges enterprises face during cloud migration and how to overcome them with the right tools, planning, and DevOps strategy.

From Data to Decisions: The Rise of Predictive Analytics in Enterprises

Learn how companies are using predictive analytics to forecast trends, manage risks, and make smarter decisions with AI-powered insights.

Why Progressive Web Apps (PWAs) Are the Future of Digital Experience

PWAs combine the best of web and mobile: offline capability, lightning-fast speed, and smooth UX. Here’s why they’re the next big thing for businesses.

The Power of Document Intelligence: Automating Data Extraction at Scale

Uncover how AI and NLP technologies are transforming document management, reducing manual effort and unlocking hidden insights from files and forms.

Powered by Leading Technologies

We leverage proven platforms to ensure scalability, security, and innovation.

What Our Clients Say About Us

Analyze Agency transformed our Snowflake warehouse into an efficient data powerhouse.

NBC Team

Analyze Agency’s team was great to work with and helped us tremendously in gathering relevant data for our clients. We enjoyed working with them and would definitely recommend them.

Hackers Rank Team

Excellent data scientists. Will work with them again in the future.

Moneylion Team

Expert, quick pace, practical.

Upwork Team

The team continually repointed us to focusing on results. They dug into the analysis quickly, understood the context of our business, and worked with us to create actionable items that they could move forward with. If you want to move fast and work with someone who "gets it", then Chris is the right person for you.

Goli Team

Analyze Agency’s work was very good, but more importantly, we had a ton of follow-up work and questions, and the team made themselves available at odd hours and were very responsive throughout. They even spotted significant issues that were driving us nuts in our raw data.

Framer Team

Ready to Transform Your Data with AI?

Let’s design intelligent solutions that turn your data into powerful insights. Whether you need scalable pipelines, AI-powered analytics, or strategic consulting, our experts are here to help.